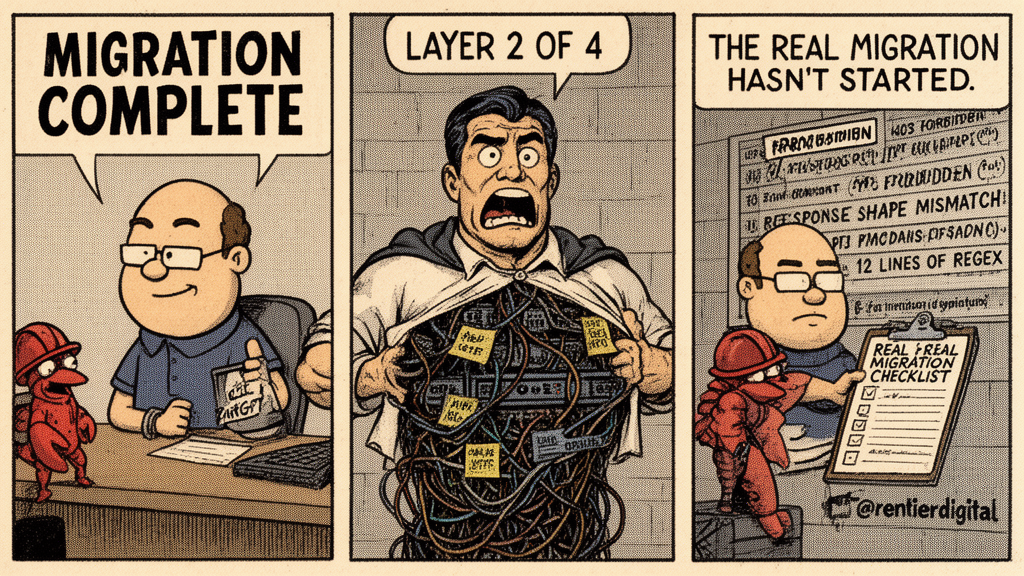

The #QuitGPT Movement Hit 4 Million. The Real Migration Hasn't Started Yet.

Anthropic's Import Memory tool moves your preferences in 60 seconds. OpenAI's Assistants API dies in August. One migration is a hashtag. The other is a rewrite.

Updated March 19, 2026: QuitGPT has grown from 1.5 million to 4 million participants. ChatGPT's US app market share dropped to 45%. The resistance got infrastructure. The lock-in problem didn't change.

Monday morning, Claude goes down. Everything. The chatbot, the API, Claude Code, all of it. Two thousand reports on Downdetector in under an hour. My X feed is a wall of people who switched from ChatGPT over the weekend discovering that their brand-new provider is already flat on its back.

TL;DR: Anthropic's Import Memory tool moves your ChatGPT preferences to Claude in 60 seconds. But preferences aren't infrastructure. When Anthropic killed my $200/month agent setup overnight, no import tool saved me. I rebuilt MCP servers, prompt contracts, CLI wrappers, and monitoring from scratch. Meanwhile, OpenAI's Assistants API sunsets in August, forcing thousands of devs into a rewrite whether they join #QuitGPT or not. This article maps the 4 layers of AI lock-in, shows what actually migrates vs. what doesn't, and gives you a portability framework so you're never stuck when the next provider decides to change the rules.

I opened my terminal, typed "anything broken" into my MCP server, got my answers in four seconds. Five SaaS apps running. n8n workflows humming. None of my production infrastructure cares whether claude.ai is having a bad day.

Four million people have now pledged to quit ChatGPT. Claude hit #1 on the App Store, downloads surged to 20 times their January levels, and daily signups broke Anthropic's all-time record every day for a week straight. ChatGPT's mobile app market share in the US dropped from 69% to 45% in twelve months. The bleeding is real. And honestly, I get it. When OpenAI signed with the Pentagon hours after Trump blacklisted Anthropic, it turned my stomach. Same feeling I got when Steinberger joined OpenAI right after preaching that frontier models were the only path forward.

There are moments when you vote with your feet, and that's a perfectly good thing to do.

The #QuitGPT movement is respectable.

But voting with your feet and migrating your infrastructure are not the same operation. Anthropic even shipped a tool to import your ChatGPT memories in 60 seconds. It's clever. It's well-made. And it solves roughly 2% of the problem. Meanwhile, OpenAI's Assistants API dies on August 26th, and thousands of devs with entire products running on it haven't gotten an import button. They've gotten a countdown.

What Import Memory Actually Moves (And What It Doesn't)

I tried the import tool. Took about ninety seconds. You go to Claude settings, hit "Start import," Claude gives you a prompt, you paste it into ChatGPT, ChatGPT dumps everything it knows about you into a code block. Name, job, preferences, recurring topics, the fact that you hate bullet points. You copy it, paste it into Claude, wait 24 hours. Done. Claude picks up roughly where ChatGPT left off.

And for someone who uses ChatGPT the way you use Google Maps — ask a question, get an answer, close the tab — this is genuinely great. The switching cost that kept casual users locked in just evaporated. Anthropic solved it in a week while their competitor was busy signing defense contracts. Well played.

But I opened the exported block and looked at what actually came through. Tone preferences. Personal context. Topics I discuss often. Now look at what didn't come through: my custom system prompts. My API integrations. My MCP server configs. My CLI wrappers. My prompt contracts. My n8n workflows that reference specific model behaviors. My monitoring dashboards wired to Anthropic's response shapes. Ugh.

Nobody has usage data on how many of those new users actually ran the import. Every outlet covered the announcement. Nobody measured adoption. That gap tells you something about the current discourse: the narrative is about the gesture, not the execution.

The gesture is real. The execution is layer 1 of 4.

Two Weeks Ago, I Lived the Migration Nobody Talks About

My OpenClaw agent ran on Claude Max for six weeks. Emails, calendar, Telegram, a multi-model system across five SaaS products. Then one morning, every request timed out. No warning email. No grace period. A wall of 403s and a vague Terms of Service notice.

Anthropic decided that piping a Max subscription through anything that isn't Claude Code was a bannable offense. Thousands of devs found out the same way I did.

I rebuilt. Two $5 VPS instances, Kimi K2.5 as primary model, MiniMax M2.5 as fallback. The full rebuild, from $200 a month down to $15, took days, not minutes. Not because the models were hard to swap. Because the infrastructure around them was welded to a specific provider.

The thing nobody tells you about forced migrations: it's not the big pieces that eat your time. It's the small ones. I had a CLI wrapper that parsed Claude's response format to extract tool calls. Twelve lines of regex. When I switched models, the response shape changed, and those twelve lines silently broke. No error — just wrong output that looked right enough to pass a quick glance. I caught it three days later when a monitoring alert fired on data that made no sense. Three days of subtly corrupted output because of twelve lines nobody thinks about.
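A strict parser turns that kind of silent failure into a loud one. Here is a minimal sketch, assuming a hypothetical wrapper that receives the model's reply as JSON with a `tool_calls` list. The field names and the `ResponseShapeError` class are illustrative, not any provider's actual response format:

```python
import json


class ResponseShapeError(ValueError):
    """The reply did not match the shape this wrapper was written for."""


def extract_tool_calls(raw_reply: str) -> list[dict]:
    """Return the tool calls in a reply, or raise if the shape is unexpected.

    Assumes a hypothetical format: a JSON object with a "tool_calls" list
    whose items each have a string "name" and a dict "arguments".
    """
    try:
        reply = json.loads(raw_reply)
    except json.JSONDecodeError as exc:
        raise ResponseShapeError(f"reply is not valid JSON: {exc}") from exc

    tool_calls = reply.get("tool_calls") if isinstance(reply, dict) else None
    if not isinstance(tool_calls, list):
        raise ResponseShapeError("reply has no 'tool_calls' list")

    parsed = []
    for index, call in enumerate(tool_calls):
        name = call.get("name") if isinstance(call, dict) else None
        arguments = call.get("arguments") if isinstance(call, dict) else None
        if not isinstance(name, str) or not isinstance(arguments, dict):
            raise ResponseShapeError(f"tool call {index} is malformed: {call!r}")
        parsed.append({"name": name, "arguments": arguments})
    return parsed
```

When the response shape changes after a model swap, this raises on the first request instead of passing wrong output downstream for three days.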

Import Memory would have saved about 2% of that migration. The other 98% was infrastructure nobody thinks about until it breaks.

The Mechanism: Lock-In Follows the Stack

The #QuitGPT migration is the financial equivalent of moving your checking account while your shell companies stay registered in the Caymans. The visible part moves instantly. The structural part doesn't move at all.

This isn't unique to AI tools. It's how lock-in works everywhere in tech. Migrating from AWS to GCP: the compute instances move, the IAM policies and service-specific integrations take months. Switching from React to Vue: the UI components rewrite, the state management and build tooling take weeks. The pattern is always the same.

The deeper the integration, the higher the switching cost.

In AI tooling, there are four layers. The top one is trivial to migrate. Each layer below it gets exponentially harder. And the four million people who pledged to quit ChatGPT? They solved exactly one of them.

The Four Layers of AI Lock-In

Layer 1: Conversational memory

Your preferences, your tone, your personal context. The chatbot knowing you like concise answers.

Everything migrates. Anthropic's Import Memory handles this in 60 seconds, and it works. This is the layer everyone is talking about, and it's also the only layer that has a solution. Time to switch: minutes.

Layer 2: API integrations

This is where it stops being a hashtag and starts being engineering work. API keys, model calls, SDKs, billing, rate limits — the code that connects your product to a specific provider's endpoints. None of it migrates automatically. You swap keys, adjust parameters, rewrite the parts that depend on provider-specific behavior. Fine-tuned OpenAI models don't run on Anthropic. Custom GPTs have no equivalent on the other side.

And this is what makes the August 26 deadline urgent even if you don't care about Pentagon contracts at all. OpenAI's Assistants API is shutting down — deprecated since last August, full shutdown in five months. Every developer who built products on it has to rewrite regardless of where they stand on the QuitGPT discourse. The only question is whether they rewrite toward OpenAI's new Responses API or use the forced rewrite as an opportunity to switch entirely. Admit it, if you have code on that API, you've been pushing this decision down the road.

Time to switch: hours to days. Weeks if you have fine-tuned models.

Layer 3: Agent infrastructure

The harness. MCP servers, prompt contracts (your CLAUDE.md or system prompts), CLI wrappers, n8n or Make workflows wired to specific models, memory layers, cron jobs, monitoring. The scaffolding that makes AI useful in production instead of just in a chat window. Almost none of it migrates.

MCP is technically a standard protocol, and yes, OpenAI, Google, and others have adopted it. In theory, an MCP server works with any provider. In practice, I spent 16 commits and 4 hours debugging OAuth for Claude's MCP implementation alone. Implementing the same server for a different provider means re-debugging a different set of undocumented constraints. Same time investment, zero reuse.

The part people consistently miss is the tool descriptions. A tool description written for Claude's parsing behavior doesn't perform the same way on GPT or Gemini. The prompt contracts framework I use is calibrated for how Claude interprets scope, constraints, and expected output. Porting that to another model isn't a find-and-replace. It's re-tuning every contract through trial and error. Same for agent harnesses — the progressive disclosure, the CLAUDE.md structure, the tool selection logic all break in subtle ways you only discover in production.

Time to switch: days to weeks.
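One way to contain the per-model re-tuning is to define each tool once and keep the model-specific description text as data. This is a rough sketch: the `ToolSpec` class, the `check_services` tool, and the family names are hypothetical. The two output shapes follow the tool formats of Anthropic's Messages API and OpenAI's Chat Completions API as I understand them, so verify against the current docs before relying on them:

```python
from dataclasses import dataclass, field


@dataclass
class ToolSpec:
    """A tool defined once, with description text tuned per model family."""

    name: str
    parameters: dict  # JSON Schema for the tool's arguments
    default_description: str
    tuned_descriptions: dict = field(default_factory=dict)  # family -> text

    def description_for(self, family: str) -> str:
        # Fall back to the default wording until a family has been tuned.
        return self.tuned_descriptions.get(family, self.default_description)

    def to_anthropic(self, family: str = "claude") -> dict:
        return {
            "name": self.name,
            "description": self.description_for(family),
            "input_schema": self.parameters,
        }

    def to_openai(self, family: str = "gpt") -> dict:
        return {
            "type": "function",
            "function": {
                "name": self.name,
                "description": self.description_for(family),
                "parameters": self.parameters,
            },
        }


check_services = ToolSpec(
    name="check_services",
    parameters={"type": "object", "properties": {}, "required": []},
    default_description="Report which monitored services are currently failing.",
    tuned_descriptions={
        "claude": "Use when asked whether anything is broken. Returns failing services.",
    },
)
```

The schema and the name stay fixed across providers. Only the description strings change, which makes the trial-and-error tuning a diff on one dictionary instead of a rewrite of the server.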

Layer 4: Institutional knowledge

Everything you know about your model that isn't written down anywhere.

Eight months of daily Claude Code usage taught me which phrasings Claude treats as hard constraints versus soft suggestions. Which instruction formats it follows versus which it creatively reinterprets. How to structure a CLAUDE.md so the model reads it as a navigation layer instead of skimming it like a README. When I switched my agent stack to Kimi K2.5 after the Anthropic shutdown, the same prompt contracts produced different results. Not wrong, just different enough that I had to re-tune every one of them. A constraint that Claude interpreted as "never do X" got read as "prefer not to do X" by a different model. That kind of drift didn't throw errors. It just quietly degraded output until a monitoring alert fired on data that made no sense.

None of that knowledge transfers. There's no export button for intuition.

Import Memory transfers what the chatbot knows about you. It doesn't transfer what you know about the chatbot.

Time to switch: weeks to months.

The QuitGPT crowd solved layer 1 in 60 seconds and called it a migration.

Build Portable, Or Build Twice

Every layer has a defense. Here's how to decouple.

For Layer 2, the move is to abstract your model calls. Don't hardcode anthropic.messages.create or openai.chat.completions.create into every file — put a routing layer in between. I run Kimi K2.5 as primary with MiniMax M2.5 as fallback, and the model is a parameter, not a constant. When Anthropic killed my setup, that routing layer is what made the rebuild take days instead of weeks.
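A routing layer of this kind can be very small. The sketch below is an illustration, not my production code: `ModelRouter`, the stub providers, and the model names are placeholders, and a real provider function would wrap whichever SDK call you use.

```python
from typing import Callable, Sequence

# A provider is any callable that takes (model, prompt) and returns text.
Provider = Callable[[str, str], str]


class ModelRouter:
    """Try each (model, provider) route in order and return the first success."""

    def __init__(self, routes: Sequence[tuple[str, Provider]]):
        if not routes:
            raise ValueError("at least one route is required")
        self.routes = list(routes)

    def complete(self, prompt: str) -> tuple[str, str]:
        """Return (model_used, reply), falling through on any provider error."""
        errors = []
        for model, provider in self.routes:
            try:
                return model, provider(model, prompt)
            except Exception as exc:
                errors.append(f"{model}: {exc}")
        raise RuntimeError("all models failed: " + "; ".join(errors))


# Stub providers standing in for real SDK wrappers.
def flaky_provider(model: str, prompt: str) -> str:
    raise ConnectionError("403 from provider")


def stub_provider(model: str, prompt: str) -> str:
    return f"{model}: ok"


router = ModelRouter([
    ("primary-model", flaky_provider),
    ("fallback-model", stub_provider),
])
print(router.complete("anything broken?"))  # ('fallback-model', 'fallback-model: ok')
```

Application code only ever calls `router.complete(...)`. Swapping providers means editing the route list, not every file that talks to a model.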

For Layer 3, write contracts for behavior, not for a model. Instead of "Claude responds well when you..." write "The agent must produce X output given Y constraints with Z verification." The contract describes what you need, not which model you're talking to. You'll still tune per model, but the contract survives a provider swap — the tuning is a weekend, a contract rewrite from scratch is a month. Keep your CLI wrappers model-agnostic too: stdin, stdout, the model as an environment variable.
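To make "X output given Y constraints with Z verification" concrete, here is one possible shape for a behavior contract. Everything in it is an assumption for illustration: the `Contract` class, the `run_contract` helper, and the `call_model` hook that stands in for a real provider call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Contract:
    """What the agent must produce, stated without naming any model."""

    goal: str
    constraints: tuple
    verify: Callable[[str], bool]  # the "Z verification" step

    def render(self) -> str:
        lines = [f"Goal: {self.goal}", "Constraints:"]
        lines += [f"- {c}" for c in self.constraints]
        return "\n".join(lines)


def run_contract(contract: Contract, model: str, call_model) -> str:
    """Send the rendered contract to a model and reject output that fails it."""
    output = call_model(model, contract.render())
    if not contract.verify(output):
        raise ValueError(f"{model!r} broke the contract: {output[:80]!r}")
    return output


def no_bullets_max_three_lines(text: str) -> bool:
    lines = text.splitlines()
    has_bullets = any(line.lstrip().startswith(("-", "*")) for line in lines)
    return len(lines) <= 3 and not has_bullets


incident_summary = Contract(
    goal="Summarize the incident in plain sentences.",
    constraints=("At most three lines.", "No bullet points."),
    verify=no_bullets_max_three_lines,
)
```

The model name is an argument here, so in a CLI wrapper it can come straight from an environment variable. Per-model tuning then lives in how the contract is rendered, while the goal, constraints, and verification stay the same after a provider swap.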

For Layer 4, test your workflow on two models once a quarter. Not because you're planning to switch. Because the test reveals which parts of your setup are portable and which are welded. When the provider changes the rules — and they will — you'll know exactly where the welds are.
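The quarterly test does not need a framework. A minimal sketch, assuming a hypothetical `call_model(model, prompt)` hook and a list of checks you already care about:

```python
def portability_report(cases, models, call_model):
    """Run every case on every model and flag cases that do not pass everywhere.

    cases: iterable of (name, prompt, check) where check(output) -> bool.
    call_model: callable (model, prompt) -> str; a hypothetical provider hook.
    """
    report = {}
    for name, prompt, check in cases:
        results = {}
        for model in models:
            try:
                results[model] = bool(check(call_model(model, prompt)))
            except Exception:
                # A crash counts as a failure for that model.
                results[model] = False
        report[name] = {"results": results, "portable": all(results.values())}
    return report


def welded(report):
    """Names of the cases that pass on some models but not all of them."""
    return sorted(name for name, entry in report.items() if not entry["portable"])
```

Run it with your current model and one alternative. Whatever `welded` returns is the list of places where the setup depends on one provider's behavior.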

Across all of it: treat every provider relationship as temporary. This sounds cynical. It's what I learned from having Anthropic shut down my infrastructure while I was asleep. They didn't owe me a warning. I didn't owe them permanent loyalty. Build accordingly.

The best migration strategy is the one you never need.

The Resistance Got Organized. It’s Still Layer 1

While I was rebuilding my agent stack on $5 VPS instances, the protest side of the migration got serious infrastructure of its own.

Scott Galloway launched Resist and Unsubscribe, a targeted boycott across ten tech companies, OpenAI included. Someone built an impact calculator that tells you exactly how much valuation damage your canceled $20/month subscription inflicts. The math is simple and satisfying: each annual cancellation is $240 in lost revenue, multiplied by OpenAI’s reported 40x revenue multiple, and suddenly your protest has a price tag in the thousands. QuitGPT went from a Reddit post to a movement covered by MIT Technology Review, France 24, and CNN. Four million participants and counting.
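The arithmetic is easy to check. The function below only reproduces the calculation as described in the paragraph above; it is not the calculator's actual code, and `valuation_impact` is a made-up name:

```python
def valuation_impact(monthly_price: int, revenue_multiple: int, months: int = 12) -> int:
    """Lost annual revenue times the revenue multiple, as described above."""
    return monthly_price * months * revenue_multiple


print(valuation_impact(20, 40))  # 9600
```

One canceled $20/month subscription works out to $9,600 of implied valuation under those assumptions.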

And here’s the part that’s genuinely new: this might be the first tech boycott where the alternatives are credible. Nobody who quit Facebook in 2018 found a replacement that actually worked. Nobody who left Twitter for Mastodon stayed longer than a month. But the person who cancels ChatGPT today can open Claude or Gemini and lose almost nothing in their day-to-day usage. The switching cost for casual users is, for the first time, close to zero.

That’s layer 1 working exactly as intended.

It still doesn’t touch layers 2 through 4. The developer with MCP servers, prompt contracts, and six months of model intuition isn’t switching because Scott Galloway told them to. They’re switching (or not) based on whether their infrastructure survives a provider change. The boycott moves the chatbot users. The portability framework moves the builders.

What Actually Matters

Four million pledges. An NYU professor running a dedicated unsubscribe campaign with its own impact calculator. ChatGPT’s US app market share down from 69% to 45%. Claude downloads at 20x their January levels.

But the thing about protest-driven platform migrations: they're loud, they're fast, and they don't last. Signal got 7.5 million protest downloads after the WhatsApp privacy scare in 2021. Researchers never published the retention data, which tells you everything about the retention data. When Twitter users fled to Mastodon in 2022, nearly 80% stayed active on Twitter with four times the engagement. Digg to Reddit is the only protest migration that held, and it held because Reddit was a better product, not because people were angrier.

The #QuitGPT fury will cool. The App Store ranking will settle. The Pentagon discourse will move to the next cycle. What won't change: if your workflow depends on a web chatbot, you're at the mercy of every outage, every pricing change, every policy shift. The devs who didn't notice Monday's outage are the ones whose infrastructure is decoupled from the provider. Workflows running on n8n. Monitoring through MCP servers on their own VPS. Model routing that doesn't care which provider is having a bad day.

The QuitGPT movement asked the right question: should you trust a single company with your AI workflow? The answer is no. But not for the reasons Reddit thinks. Not because of Pentagon contracts. Because of lock-in. Because the provider will always, eventually, change the rules.

The real migration hasn't started. For most people, it never will. The ones who build portable won't need it anyway.

Sources & links:

- Anthropic Import Memory tool

- QuitGPT pledge data (1.5M claimed, 17K verified): Cybernews, QuitGPT.org

- Claude download surge (20x January levels): Similarweb via CNN (March 3, 2026)

- Claude #1 App Store, signups record, +60% free users, 2x paid subs: TechCrunch / Sensor Tower (March 1, 2026)

- ChatGPT uninstalls +295%: Sensor Tower via TechCrunch (March 2, 2026)

- OpenAI Assistants API sunset: OpenAI developer docs (August 2025 announcement, full shutdown August 26, 2026)

- Signal download data: Sensor Tower via CNBC (January 2021)

- Twitter/Mastodon migration study: Jeong et al. 2023, arXiv

I write about building AI infrastructure that survives when the provider doesn't. No hype, no hashtags, just the stuff that breaks and how I fixed it. Follow if that's useful.

That cover image was made by AI. I tried hiring a real illustrator once but they wanted to "explore the emotional palette of infrastructure lock-in" and I just needed a picture of a server.
